Published Work

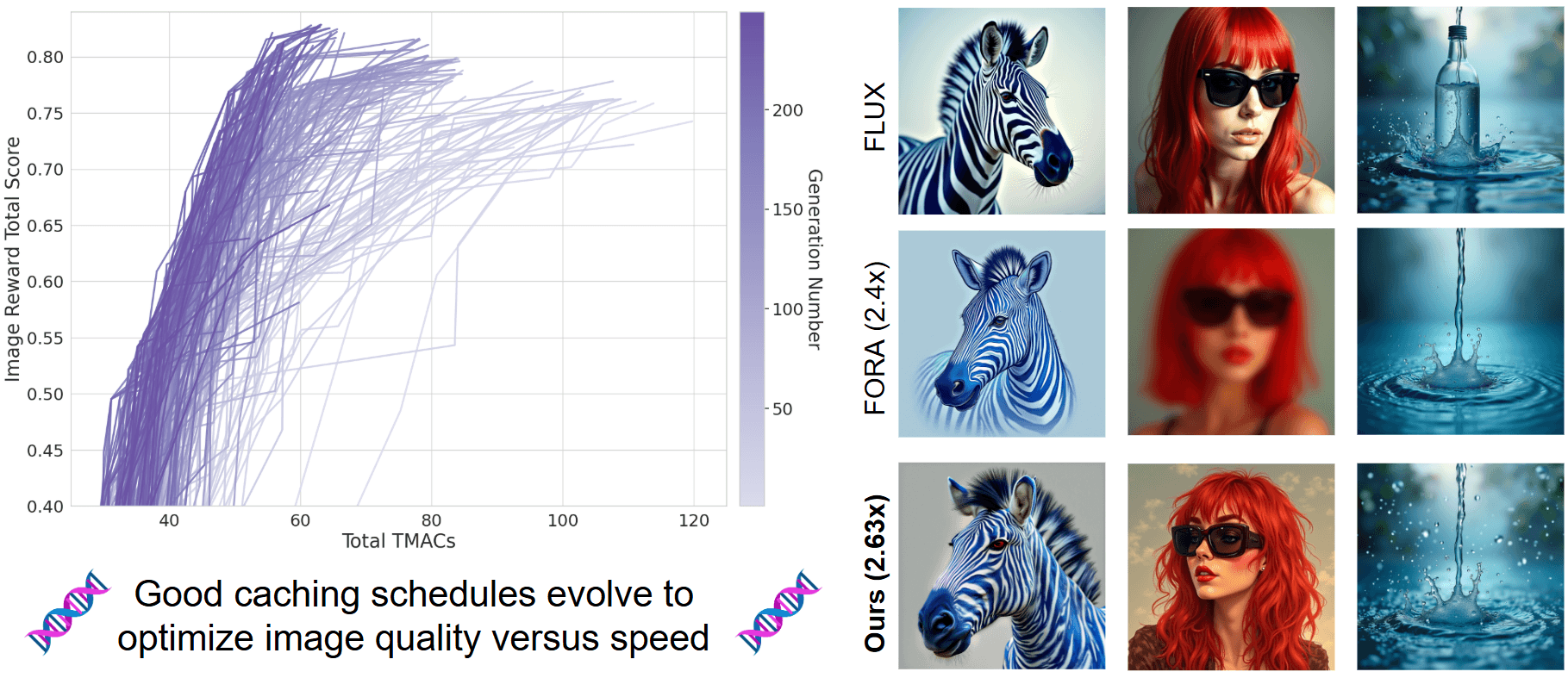

Evolutionary Caching to Accelerate Your Off-the-Shelf Diffusion Model

Anirud Aggarwal, Abhinav Shrivastava, Matthew Gwilliam

International Conference on Learning Representations (ICLR) 2026

We introduce ECAD, an evolutionary algorithm that automatically discovers efficient caching schedules for accelerating diffusion-based image generation models. Among training-free methods, ECAD is both faster and higher in quality than the prior state of the art, and it generalizes across models and resolutions.

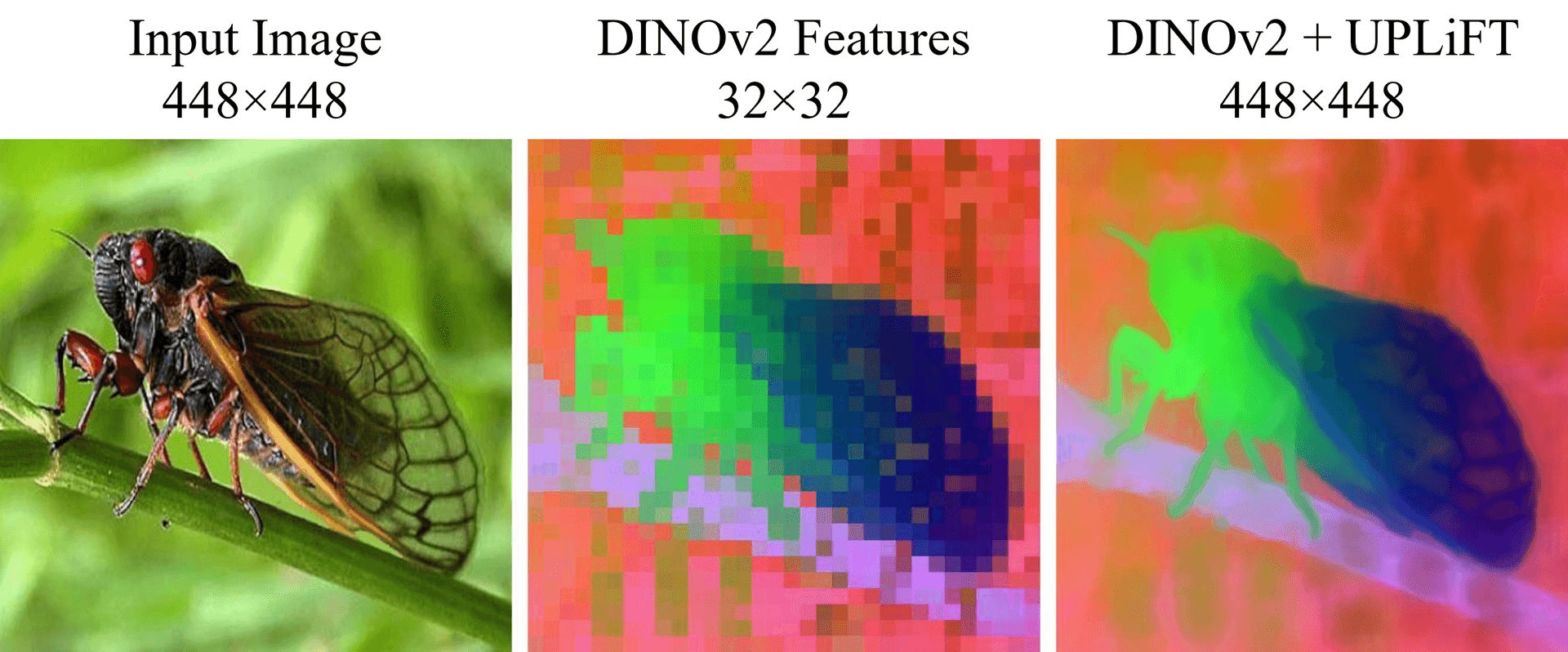

UPLiFT: Efficient Pixel-Dense Feature Upsampling with Local Attenders

Matthew Walmer, Saksham Suri, Anirud Aggarwal, Abhinav Shrivastava

IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2026

We introduce UPLiFT, a lightweight, iterative feature upsampler that converts coarse ViT and VAE features into pixel-dense representations using a fully local attention operator. It achieves state-of-the-art performance on segmentation and depth tasks while scaling linearly in the number of visual tokens, and it extends naturally to generative tasks for efficient image upscaling.